ComfyUI Manager (Recommended)

Install ComfyStream directly into an existing ComfyUI setup.

Docker (Self-contained)

Run ComfyStream and ComfyUI together in a prebuilt Docker container.

Install with ComfyUI Manager

If you already have ComfyUI installed, the easiest way to install ComfyStream is via the built-in ComfyUI Manager.

Install ComfyUI (if needed)

Download and install ComfyUI if you haven’t already.

Currently, ComfyStream works only with the hiddenswitch fork of ComfyUI. The team is actively working to add full support for official ComfyUI.

ComfyUI must be run with frontend version v1.24.2 or older. To do this, pass the flag --front-end-version Comfy-Org/ComfyUI_frontend@v1.24.2 when launching ComfyUI.

Install ComfyUI Manager (if needed)

Follow the ComfyUI Manager installation guide if you haven’t already.

Install ComfyStream via Manager

- Launch ComfyUI.

- Open the Manager tab.

- Search for ComfyStream and click Install.

Manual Installation

If you prefer more control over the installation process, you can install ComfyStream manually using one of the following methods:

Python 3.12 or greater is required.
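Before starting a manual install, it can help to confirm that the interpreter you plan to use meets this requirement. The script below is a minimal sketch of such a check; the names `MIN_PYTHON` and `meets_minimum` are made up for this example and are not part of ComfyStream.

```python
import sys

# Minimum version stated in this guide.
MIN_PYTHON = (3, 12)


def meets_minimum(version=sys.version_info, minimum=MIN_PYTHON) -> bool:
    """Return True if the (major, minor) part of `version` is at least `minimum`."""
    return tuple(version[:2]) >= minimum


if __name__ == "__main__":
    found = ".".join(str(part) for part in sys.version_info[:3])
    if meets_minimum():
        print(f"Python {found} meets the 3.12 requirement.")
    else:
        print(f"Python {found} is too old; 3.12 or greater is required.")
```

Run it with the same interpreter you intend to use for ComfyUI, since a machine may have several Python versions installed.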

Install ComfyStream via comfy-cli

The following commands install the latest version of ComfyUI and ComfyStream:

After completing the installation, start ComfyUI with the following command:

This will start the ComfyUI server with ComfyStream installed.

Cloning Repository

If you prefer to install ComfyStream manually by cloning the repository instead of using the Manager, follow these steps:

After completing the installation, navigate to the root of the ComfyUI directory.

This will start the ComfyUI server with ComfyStream installed.

Install with Docker

Run ComfyStream in a prebuilt Docker environment, either on your own GPU or a cloud server.

Local GPU

Run ComfyStream locally with your own GPU.

Remote GPU

Deploy ComfyStream on a remote GPU using RunPod or Ansible.

Local GPU

If you have a compatible GPU on Windows or Linux, you can run ComfyStream locally via Docker.

Prerequisites

First, install the required system software:

Windows

Install WSL 2

- From a new command prompt, run: wsl --install

- Update WSL if needed: wsl.exe --update

Install NVIDIA CUDA Toolkit

Inside WSL, install the NVIDIA CUDA Toolkit.

Install Docker Desktop

Install Docker Desktop.

Run the Docker Container

Create model and output directories

These folders store your models and generated outputs. Docker mounts them into the container.
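As an optional illustration, the two folders can be created with a short Python script. The folder names `models` and `output` and the function name `create_storage_dirs` are hypothetical placeholders chosen for this sketch, not names prescribed by ComfyStream; use whatever paths you plan to mount into the container.

```python
from pathlib import Path


def create_storage_dirs(base: Path) -> tuple[Path, Path]:
    """Create a models folder and an output folder under `base`.

    The folder names are placeholders chosen for this sketch.
    """
    models = base / "models"
    output = base / "output"
    for folder in (models, output):
        # parents=True creates `base` if needed; exist_ok=True makes reruns safe.
        folder.mkdir(parents=True, exist_ok=True)
    return models, output
```

For example, calling `create_storage_dirs(Path.home() / "comfystream")` would create both folders under your home directory. The returned paths are the ones you would then mount into the container.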

Run the container

Available flags:

- --download-models downloads some default models

- --build-engines optimizes the runtime for your GPU

- --server starts ComfyUI server (accessible on port 8188)

- --api enables the API server

- --ui starts the ComfyStream UI (accessible on port 3000)

- --use-volume should be used with a mount point at /app/storage. It is used during startup to save/load models and compiled engines to a host volume mount for persistence
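One way to think about these flags is as two groups: setup flags (--download-models and --build-engines) that prepare models and engines, and service flags that start the servers. The helper below is a minimal sketch of that split. The function name and its parameters are hypothetical, and it only assembles the list of flags passed to the container; it does not run Docker. Passing all three service flags on every run is an assumption of this sketch.

```python
def container_flags(first_run: bool, use_volume: bool = False) -> list[str]:
    """Assemble the container flags for a first run or a later run."""
    flags = []
    if first_run:
        # Setup flags: download default models and build GPU-specific engines.
        flags += ["--download-models", "--build-engines"]
    # Service flags: ComfyUI server, API server, and ComfyStream UI.
    flags += ["--server", "--api", "--ui"]
    if use_volume:
        # Expects a mount point at /app/storage, as described in the list above.
        flags.append("--use-volume")
    return flags


if __name__ == "__main__":
    print("first run: ", " ".join(container_flags(first_run=True)))
    print("later runs:", " ".join(container_flags(first_run=False)))
```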

The --download-models and --build-engines flags are only needed the first time (or when adding new models).

Access ComfyUI

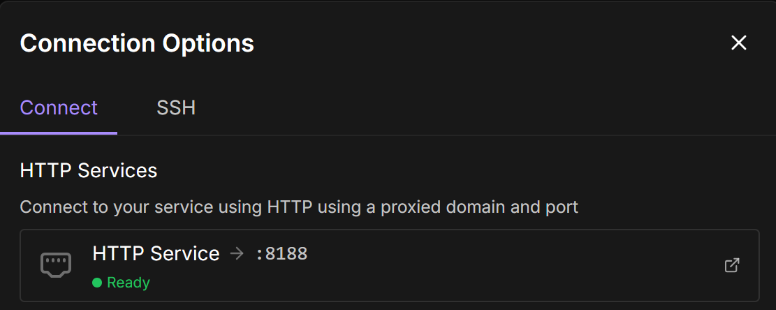

Open your browser and go to http://localhost:8188 to start using ComfyUI with ComfyStream.

Access ComfyStream UI

The ComfyStream UI is available at http://localhost:3000, where you can start live streams directly by keeping the stream URL as http://localhost:8889 and selecting a workflow.
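If one of these pages does not load, a quick check is whether the corresponding port is accepting connections at all. The script below is a minimal sketch that tries a plain TCP connection to each port mentioned in this guide. The names `PORTS` and `port_open` are made up for this example, and a successful connection only shows that something is listening, not that the service is healthy.

```python
import socket

# Ports named in this guide: ComfyUI, the ComfyStream UI, and the stream URL.
PORTS = {"ComfyUI": 8188, "ComfyStream UI": 3000, "stream URL": 8889}


def port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout`."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False


if __name__ == "__main__":
    for name, port in PORTS.items():
        state = "reachable" if port_open("localhost", port) else "not reachable"
        print(f"{name} (port {port}): {state}")
```

A port reported as not reachable usually means the container is not running, the matching flag was not passed, or the port was not published to the host.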

Remote GPU

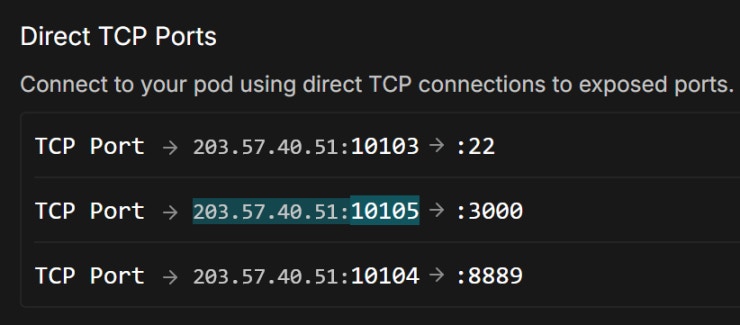

If you don’t have a local GPU, you can run ComfyStream on a cloud server. Choose between a managed deployment with RunPod or manual setup using Ansible.

Run with RunPod

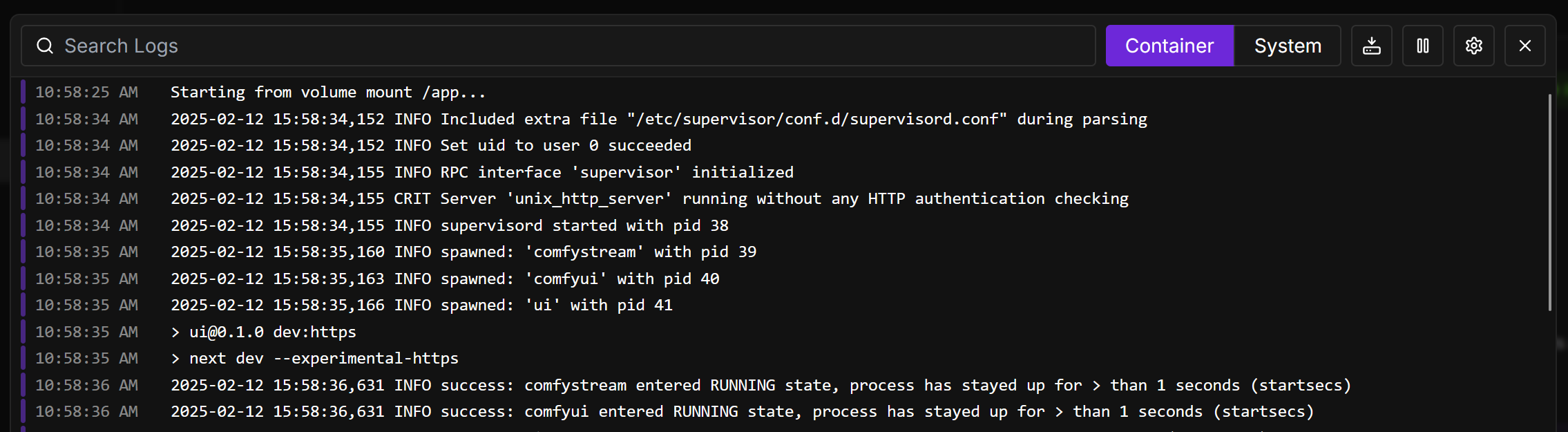

RunPod provides a simple one-click deployment of ComfyStream in a managed container environment, perfect for testing or avoiding manual setup.

Launch the RunPod template

Use this template to get started:

livepeer-comfystream

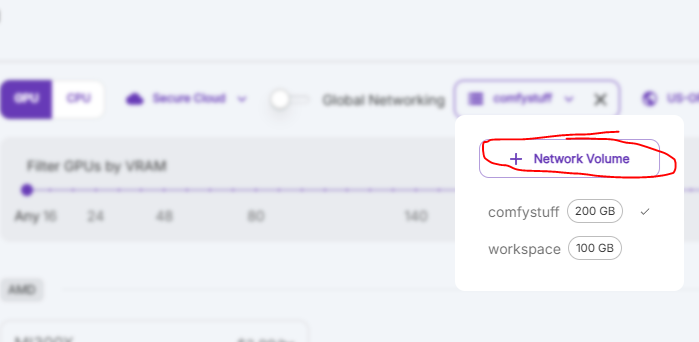

RunPod does not persist pod data by default. To ensure models and engines persist across pod restarts, use the RunPod template livepeer-comfystream-volume.

This template uses the --use-volume flag to save all models and engines to the mount path /app/storage. A network volume is required.

Deploy with Ansible

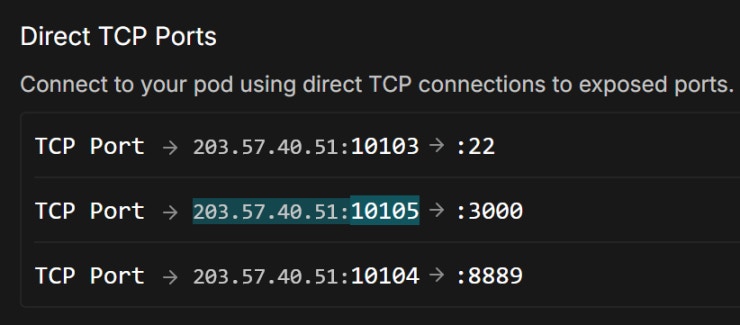

Use Ansible to deploy ComfyStream to your own cloud VM (on providers such as AWS, GCP, Azure, or TensorDock). This is a good fit if you want more control or a repeatable, fully automated setup.

Open required ports

Allow incoming traffic on:

- 22 (SSH – internal port)

- 8189 (ComfyStream HTTPS – internal port)

Ensure your cloud provider maps these to public ports. Internal port 8189 is proxied to 8188, which serves the ComfyUI interface.

Install Ansible

Install Ansible on your local machine (not the VM). See the official installation guide.

Clone the ComfyStream playbook

No need to install anything on the VM — Ansible sets it all up over SSH.

Set a custom password (optional)

Edit the comfyui_password value in plays/setup_comfystream.yaml.

This sets the password used to access ComfyUI in your browser.

Run the playbook

If you’re not using a root user, add --ask-become-pass to enter your sudo password.

The playbook pulls a ~20 GB Docker image on the VM. This may take a while on first run.

Monitor download progress (optional)

If needed, SSH into the VM and run:

Helpful if you’re unsure whether the download is progressing during first-time setup.

Accessing ComfyStream

-

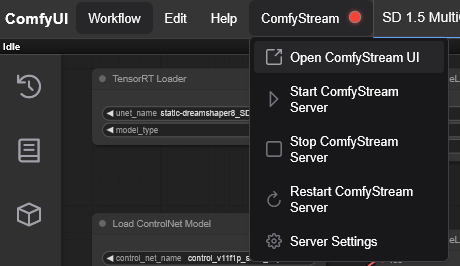

Click the ComfyStream menu button in ComfyUI:

-

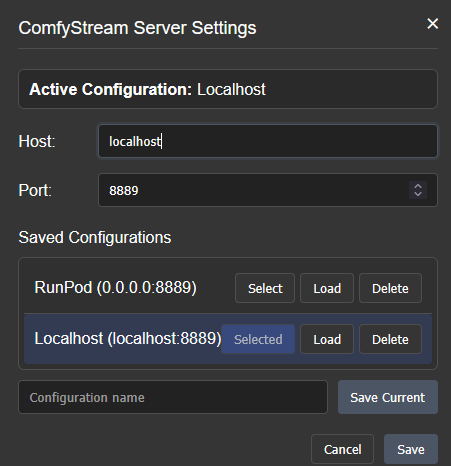

Open Server Settings to verify that ComfyStream is configured to bind to the correct interface and port.

For remote environments like RunPod, set the Host to

0.0.0.0.

- Click Save to apply the settings.

-

Open the ComfyStream menu again and click Start ComfyStream Server. Wait for the server status indicator to turn green.

You can monitor ComfyStream server logs in the ComfyUI log terminal tab.

-

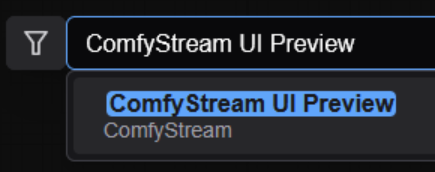

Click Open ComfyStream UI to launch it in a new tab. Alternatively, double-click the node graph and search for ComfyStream UI Preview.

Troubleshooting

Docker Hub Rate Limits (toomanyrequests error)

If you encounter a toomanyrequests error while pulling the Docker image, either wait a few minutes or provide your Docker credentials when running the playbook: